Neural networks and the development of image recognition applications

At Opscidia, we usually talk a lot about text. Today, we’re going to change things up and talk about images. Why? Automatic image analysis is based on the same technology (neural networks) as automatic text analysis. The field is a few years ahead of automatic text analysis, so we have been looking to it for inspiration. Here is some of what we’ve learned!

Image recognition is a constantly evolving field, which has seen many advances in recent years thanks to the development of artificial intelligence and neural networks. Indeed, neural networks are machine learning algorithms that are particularly suited to image recognition, as they can learn to recognise complex patterns from training data.

Summary:

- What is a neural network and how does it recognise images?

- The evolution of neural networks for image recognition over time

- The advantages and disadvantages of using neural networks for image recognition

- The different challenges to overcome in developing applications for image recognition

- Examples of the use of neural networks for image recognition

1. What is a neural network and how does it recognise images?

A neural network consists of layers of interconnected neurons whose connection weights are adjusted during training so that the network produces accurate outputs for its input data. For image recognition, the input is an image represented as a grid of pixel values, and the output is a predicted label for that image. For example, a neural network can be trained to recognise dogs in images: when it receives a new image as input, it predicts whether or not that image contains a dog.
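As a rough illustration of the "image in, label out" idea, here is a minimal sketch in plain Python of a two-layer network making a prediction. Everything in it is an assumption made for the example: the 2x2 greyscale image, the helper names (`dense`, `predict_dog_probability`) and above all the weights, which are picked by hand here whereas a real network learns them from training data.

```python
import math

def relu(x):
    # Common activation: keep positive values, zero out negative ones
    return max(0.0, x)

def sigmoid(x):
    # Squash any number into the range (0, 1) so it can be read as a probability
    return 1.0 / (1.0 + math.exp(-x))

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each neuron computes a weighted sum of all inputs."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def predict_dog_probability(image, hidden_w, hidden_b, out_w, out_b):
    # Flatten the pixel grid and scale values from [0, 255] to [0, 1]
    pixels = [p / 255.0 for row in image for p in row]
    hidden = dense(pixels, hidden_w, hidden_b, relu)
    return dense(hidden, out_w, out_b, sigmoid)[0]

# Hypothetical 2x2 greyscale image and hand-picked (not trained) weights
image = [[0, 255], [255, 0]]
hidden_w = [[1.0, -1.0, -1.0, 1.0], [-1.0, 1.0, 1.0, -1.0]]
hidden_b = [0.0, 0.0]
out_w = [[-2.0, 2.0]]
out_b = [0.0]

probability = predict_dog_probability(image, hidden_w, hidden_b, out_w, out_b)
label = "dog" if probability >= 0.5 else "not dog"
print(f"{probability:.3f} -> {label}")  # prints "0.982 -> dog"
```

Training consists of adjusting `hidden_w`, `hidden_b`, `out_w` and `out_b` automatically until predictions like this one match the labels in the training data.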

There are different types of neural networks that can be used for image recognition, but the best known is the convolutional neural network (CNN). CNNs are particularly suited to image recognition, as they are designed to process data that has a spatial structure, such as images. CNNs are made up of layers of neurons that are designed to extract features from the image, such as lines or shapes, and combine them to predict a label.
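To make the idea of feature extraction more concrete, here is a minimal sketch in plain Python of the sliding-window operation at the heart of a convolutional layer. The `convolve2d` helper, the 4x4 image and the hand-written kernel are illustrative assumptions: in a real CNN the kernel values are learned during training, and deep learning libraries implement the operation far more efficiently.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and return the feature map.

    Like most deep learning libraries, this does not flip the kernel
    (strictly speaking it is a cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Hypothetical 4x4 image: dark left half (0), bright right half (1)
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-written kernel that responds to dark-to-bright vertical edges
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is non-zero only in the middle column, where the image changes from dark to bright. A CNN stacks many such filters, and later layers combine these simple responses into more complex shapes before predicting a label.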

2. The evolution of neural networks over time

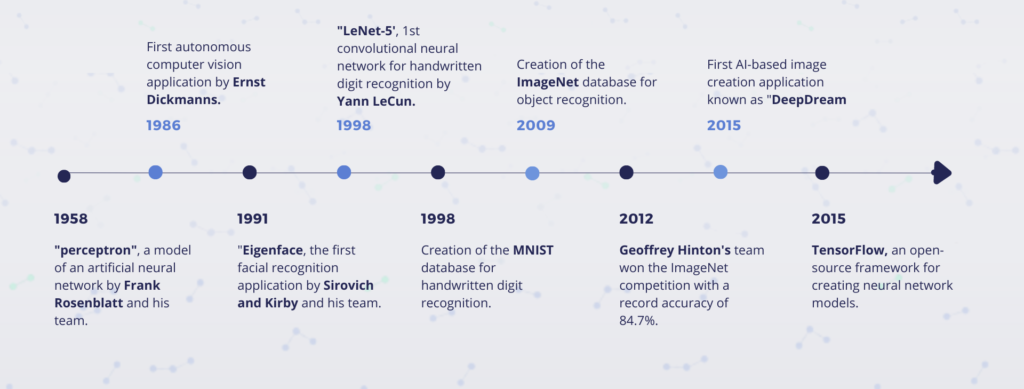

Artificial neural networks were developed in the 1950s, but their use in image recognition did not really begin until the late 1980s.

1958: Psychologist Frank Rosenblatt develops the first “perceptron”, an early model of an artificial neural network.

1986: Ernst Dickmanns and his team at the Bundeswehr University Munich in Germany develop the first autonomous computer vision application: a system that enables a car to drive autonomously. It uses video cameras to capture images of the road and image processing algorithms to identify obstacles and calculate the optimal trajectory for the car.

1991: Matthew Turk and Alex Pentland apply the “Eigenface” approach, introduced by Sirovich and Kirby in 1987, to face classification, producing the first facial recognition application. However, it is based on principal component analysis rather than on the neural networks used today.

1998: Yann LeCun and his colleagues at AT&T Labs publish LeNet-5, a convolutional neural network model for handwritten digit recognition.

Yann LeCun developed convolutional neural networks (ConvNets) to recognise handwritten numbers on cheques for the AT&T Bell Labs research department. In the 1990s, image recognition with ConvNets stagnated due to the lack of training data and the limited computing power available at the time.

1998: Creation of the MNIST database for handwritten digit recognition.

2009: Creation of the ImageNet database for object recognition.

These databases allowed researchers to collect and annotate large numbers of images, enabling more complex neural networks to be trained.

2012: Geoffrey Hinton’s research team at the University of Toronto uses a deep convolutional neural network (DCNN), now known as AlexNet, to win the ImageNet competition with a record top-5 accuracy of 84.7% (that is, the correct label was among the model’s five best guesses for 84.7% of test images).

This victory marked the beginning of a new era for image recognition, where neural networks began to outperform conventional algorithms in many computer vision tasks.

2015: Google releases TensorFlow, an open-source framework for building neural network models, which makes such models easier to train and use.

2015: Alexander Mordvintsev at Google develops “DeepDream”, the first AI image creation application. DeepDream uses convolutional neural networks to generate original images from a given source image.

3. Advantages and disadvantages of using neural networks for image recognition

There are several advantages to using neural networks for image recognition. Firstly, they can process high-resolution images and recognise complex patterns that would be difficult for a human to detect. Secondly, they learn autonomously from training data, which means that they can adapt to new images without the need for explicit programming. Finally, they are often faster and more accurate than traditional image recognition approaches, such as classifiers built on hand-crafted features.

4. Various challenges to overcome in developing applications for image recognition

There are, however, some challenges to overcome in the development of image recognition applications based on neural networks. Firstly, they often require a large amount of training data to achieve satisfactory performance. If you do not have enough training data, your model may not be robust enough to generalise to new images. Secondly, they can be expensive to train, because training in a reasonable time usually relies on costly hardware such as GPUs (graphics processing units). Finally, they can be difficult to understand and interpret, which is a problem for applications that require an explanation of their predictions.

Despite these challenges, neural network image recognition remains a very promising technology, which is used in many applications, including health, security, advertising and entertainment. It opens up new possibilities for visual information processing and better user experiences in many areas.

5. Examples of the use of neural networks for image recognition

- DALL-E by OpenAI: DALL-E is an artificial intelligence program that generates images from text descriptions. It uses a version of the GPT-3 model with 12 billion parameters to interpret the inputs and produce the requested images. The images can depict realistic objects as well as things that do not exist, for example a goat on top of Mont Blanc, a hybrid between a hippopotamus and a chicken or a car of the future…

- Text to Art by Midjourney: AI-based text-to-art generators create realistic or artistic images from text. These systems are powered by advanced artificial intelligence models with billions of parameters, trained on billions of images.

- Facial recognition is a biometric technology that uses deep learning algorithms to map human facial features and create a facial signature. The models are trained on a large number of images of people from the web; the facial recognition system then locates and compares facial features to identify a person from an image. In France, facial recognition is authorised only for the police and gendarmerie, through the TAJ (Traitement d’antécédents judiciaires, the criminal records processing file). This database contains records on 18 million people, 8 million of whom have photos. Facial recognition therefore allows French police forces to find individuals registered in the TAJ using images or videos captured, for example, during public demonstrations. Real-time facial recognition is not permitted under French law.

In summary, neural networks are a powerful tool for image recognition, a field that has seen many advances in recent years thanks to artificial intelligence. They are particularly suited to recognising complex patterns in high-resolution images, and are used in many applications to improve user experiences. However, they require a significant amount of training data, can be expensive to train, and can be difficult to understand and interpret. Despite these challenges, they remain a very promising technology that opens up new possibilities for visual information processing.
